Activity

-

Christine Martin posted an article

Embrace the Ethical Implementation of Digital Identity

It is more important than ever to take back control of our identities.

I just had two different but related things happen with my 18-year-old daughter regarding her identity…

This week, I took her to the DriveTest Centre to get her G1 (driving license). Since she’s 18, I left it up to her to ensure she had all the necessary documentation. She brought her Ontario Health Card and Canadian Passport. When it was her turn, she went to the counter and presented her documents. The representative looked over the papers and let us know the passport was expired, and thus she could not accept it. She asked if she had another piece of identification, like a birth certificate. Of course, she did not have this with her. As we left the DriveTest Centre, I mentioned that we wouldn’t have had this problem if she had a digital wallet that could store her identity documents. She would have had all her credentials on her phone to prove who she was. She told me, in short, that it wasn’t a better alternative because she just watched a movie on digital identity, and we are all going to turn into tracked and controlled robots of Big Brother if we let that happen.

My daughter opened a new bank account online, but she still has to present herself in person to finalize things. With a digital wallet holding credentials and verification via biometrics, she could have completed that step online and had access to the new account immediately. Why does the bank offer the option to open an account online if the process can’t be finished there?

You’re already at risk.

Maybe it’s because of the nature of my job in decentralized identity consulting, but lately, I’ve been seeing a lot of conspiracy theories on social media about Self-Sovereign Identity (SSI). People criticize the way it’s being implemented and warn about the negative consequences it will have. It’s almost as if people don’t realize that organizations are already monitoring and influencing us and that Google and social media algorithms have been instrumental in this.

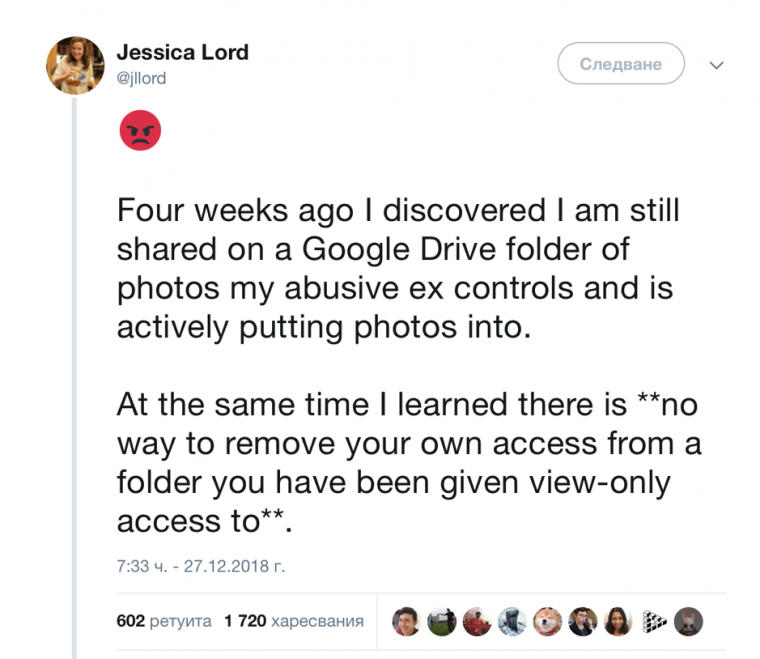

Right now, Facebook owns your identity and essentially decides what you see in your feed; and Google tracks your every move. These companies claim our data as their asset and make money off it.

“Many acts of ‘digital misdirection’ are happening before our very eyes every day, and we are starting to become more aware of them. Every action we perform online has become a piece of data which is used to coerce and constrain our digital experiences. We see it in the ads that show up across all our devices following an Internet search, in the ever-narrower set of content we’re shown on our social media sites, and in the increasingly compelling, and sometimes spooky, product recommendations we receive. This digital misdirection goes on to the point that we begin to wonder whether we can still exercise any free will online at all.” – The Rise of Surveillance Capitalism

What if you could monetize your identity? What if you could share extra preference data with Facebook and allow them to share that data with a third party for a fee? You could charge $0.50 for every additional set of preference data you share. Self-Sovereign identity can give you control over your data and generate passive income. Why wouldn’t you pick this option?

How can we trust them to be honest stewards of our data?

A lawsuit has been filed against Google that stems from investigations dating back to 2018 by Princeton University and the Associated Press. The lawsuit alleges that “Google falsely led consumers to believe that changing their account and device settings would allow customers to protect their privacy and control what personal data the company could access. The truth is that contrary to Google’s representations it continues to systematically surveil customers and profit from customer data.”

What else are these media giants doing that we haven’t figured out yet? How Bad is the Global Data Privacy Crisis? And you want them holding your information? I’m confused.

Platforms like Facebook and Google aren’t fond of losing access to people’s data; having less control over the user data makes it far less valuable for monetization. I’m not sure why people aren’t more concerned with this aspect. I can’t help but come up with my own conspiracy theory: the Facebook algorithm suppresses positive news and advancement in SSI while pushing misinformation. With digital identity, you (the holder) will be able to control your identity and decide which credentials to share with whom. That’s a significant loss for big tech.

SSI Architects care about privacy and security

I saw a Facebook post sharing a photo of The World Economic Forum – Advancing Digital Agency report with the caption, “Digital ID, it isn’t just a rumour, people. WEF wants to control everyone’s life. SHARE”. The WEF report is about protecting users, the challenges of broken trust, and data intermediaries, not about controlling everyone’s life. There are many benefits to digital identity, particularly for vulnerable and marginalized groups such as refugees.

We care about privacy and security; we have the same concerns. Organizations like Evernym/Avast want to embed eIDAS in their products, but only if it addresses these four problems and maximizes opportunities.

Something crucial for laymen to remember is that governments cannot build and implement these frameworks without help from the private sector. That includes SSI consultants like us here at Continuum Loop. We’re regular members of society; we have friends, family and children that we care about and want to protect: now and in the future. We are involved because we care and are aware of the negative implications and aspects; we can help mitigate these factors, build these frameworks, and make them beneficial for all.

Hold, own, and control your credentials/identity.

Digital ID is nothing new; it’s been around for a while in one form or another. However, the COVID pandemic has caused a “digital acceleration” event where our reliance on technology has catapulted forward. The pandemic has accelerated the adoption in many ways, like the increased use of QR codes and contactless payment to mitigate the risk of exposure to the virus. In particular, it has helped to raise awareness of the need for such a system and its benefits.

You will take back control of your identity and hold it. Not Facebook, not Google, and you will decide what credentials to share on a need-to-know basis. We don’t have to be scared of the shift; we have to ensure the architecture is built ethically for all.

The Privilege of Hesitation.

We are privileged to be able to be so critical of these emerging technologies. We take for granted that the college or university we graduated from will always be there or that our government institutions will always be in place and functioning to provide us with the services we need. I can’t help but wonder how a current refugee, who had no time to take paper documents, would feel to have the ability to easily prove their identity while starting over in a new country. All we have to do is look to Ukraine and see why centralized identity systems can cause a problem.

Many Ukrainians have been displaced and need to apply for new documents to be able to travel and access services in other countries. The centralized identity system can make it difficult for people to get their records. As different groups seek refuge, they face unique challenges. Many Ukrainians of Roma origin, for example, suffer discrimination in Ukraine and may not have any documentation indicating their identity or citizenship. Being undocumented as you flee conflict and navigate foreign countries can lead to many dangers like human trafficking. Desperation can lead to refugees bribing government officials to get their documents.

In contrast, Estonia has a practical but highly-centralized digital identity system that makes it easier for people to access the various services they need. While it is centralized and questionable from a privacy and surveillance perspective, this system allows for secure and transparent transactions that make citizens’ and e-residents’ lives more convenient and secure. The Estonian government has been using this technology since 2001, and it has helped them become one of the most digitally advanced countries in the world.

While this implementation of digital identity is not ideal for many reasons, it’s a step in the right direction, and we can build from it. The flaws within the system (e.g. privacy, centralization) can be handled.

Rebuilding Trust

These technologies cannot move forward without the general public’s adoption. Organizations must rebuild trust for this to happen. Those building the framework architecture are fully aware of this challenge; the general public has lost confidence in the way organizations hold and use their online information.

There are many possible ways to rebuild trust. One way is to give people more control over their information. With Self-Sovereign Identity, they can choose what information they share and with whom, and they can also see how their data is being used and change their settings accordingly.

Another way to rebuild trust is to ensure that the technology is secure. People need to know that their information is safe when shared online. Organizations need to ensure that they use the latest security technologies, such as blockchain, to protect people’s information.

Finally, people need to know that the organizations they trust with their information are reputable and honest. Organizations need to be transparent about using people’s information and their steps to protect it, and Verifiable Credentials will facilitate this.

In a world where corporations and governments are constantly harvesting our data, it is more important than ever to take back control of our identities. Self-sovereign identity is a new way of thinking about identity that puts the individual in charge of their information. We should embrace it and use it to create a more just and equitable society.

-

Lilian Tseggai posted an article

Interview with Janine Seebeck, COO, BeyondTrust

Janine Seebeck tells us all about her journey into the identity space

Tell us how you got involved in the world of identity technology?

I started out working for PwC, so I’m an accountant by trade. As part of my journey through public accounting, I was sent out to California where I got involved in the tech industry, auditing IPOs during the dot.com boom years. I had actually started out working in the manufacturing sector in Cleveland, Ohio, but when the firm asked for people to go out west to help with the technology sector, I put my hand up! Why not? It’s a good mantra, I believe, especially for women: “Raise your hand and have a go! The more opportunities you can have to put yourself out there and learn new things, the more it helps to advance your own career.”

So, I headed for California and found I loved the world of tech – it was super cool, fast-paced, and a lot of fun. After a short stint back in Cleveland, I eventually transferred over to Atlanta, Georgia so I could stay within the tech sector. From there, I moved to work for a publicly listed company as their Corporate Controller. As I progressed in my career with them, I was offered the opportunity to move out to Australia. I had a really good mentor in my boss, who encouraged me to go for the role of Regional CFO in APAC. My husband and I decided to pack everything up, take our dogs, and move half-way across the world, where we stayed for 3.5 years!

On our return to the US, I went to work for another publicly traded software company as their Controller – and with the support of another great boss, I moved up to be CFO after a couple of years.

What has been most helpful to you in your career development?

The role of a supportive boss and mentor has always been critical for me. In my experience, the best ones are super-smart and are genuinely committed to seeing their people succeed. Interestingly, at this company, the CEO offered me the role of CFO just months after I had had my second child. What was huge about this is that he told me they wanted me to do the job even though they recognized that my family circumstances meant I really couldn’t travel much and would have other calls on my time. I think, as females, we often feel guilty that we have to divide our time between family and work commitments, so having someone recognize me as a “person” and not just an employee was really great.

My advice to others would be to look for the people in your business who act as advocates, who try to lift you up all the time, and who see you in the round.

And to CEOs and business leaders, my advice would be: “Look for the people with fire in their bellies! The people who want to grow regardless of their personal circumstances. And then… trust your team. You know they have the skills, so you just need to trust that they will find ways to manage their personal and professional lives.”

For me, that is really what leads to true inclusion.

Was the younger Janine an ambitious go-getter? Or did something happen along the way to spark your drive?

I have never been very good at just sitting still! I’ve always had a vision of doing great things. Now that I have a family, I am so appreciative of my husband – we really do share all the responsibility, and I couldn’t do it without him. When I had my first child, I did consider taking a back seat, but it was my Dad who said, “Are you kidding???” And, after a few months’ maternity leave, even my husband was saying, “You need to go back to work!!” For good or bad, I think my background and family instilled in me a need to be working – taking ownership and accountability, and pride, in what I do. And that’s a reason why I really enjoy working at BeyondTrust. I genuinely do believe they try to embody those same cultural values.

Do you think your working class background has helped you in some way to identify more with customers or users – and with other colleagues?

For sure. I think if I had not come from a more middle-class background, I might not have recognized the full diversity that exists in our society. And that is so important for anyone working in the identity sector. We have to stay really focused on the customer. Differentiation comes from thinking about the different ways a customer may want to buy or use your products. There is so much complexity in what we are trying to do in this sector that we really need to remind ourselves what it feels like to be the person at the end of the chain – the person trying to actually complete a service or a transaction.

How have you taken all these learnings into your role as COO at BeyondTrust?

What drives me today is that I know I can help people drive change. Whether that is seeing someone on my team move on to the next stage in their career or helping to transform something within the business. I am a big fan of change – it has been a huge part of my life, and I am not afraid of having to adapt. What I love is the fast pace of the world of identity, and I honestly believe that what I do can help our customers make changes that benefit everyone.

Everything that my teams do really is about stopping and asking, “How do I make this better for our customers?” Our company lives by a “customer-first” pledge, but on a day-to-day basis, we strive to answer the question, “How do I make this work?” rather than “Why can’t this work?” It keeps us all really motivated. In my career as an accountant, the focus was very much on moving in a very structured way through a process, ticking boxes as we went. Now, I’m much more likely to ask “is this box necessary? What would happen if it wasn’t included?”

What is the most important lesson you have learned along the way?

I had a humbling experience earlier in my career that really helped change my trajectory. I was a Financial Controller, and I was given the opportunity to stay with that company and move into a new role in a new location. I really thought I was the best leader my team had had and was leaving them in a great place to grow from. But, in fact, my successor fed back to me that the team wasn’t great at all. They couldn’t take responsibility and were always looking to be “managed”. What that really taught me is that I had tried to manage them by always being there – always being helpful and picking up the pieces for them. I didn’t allow them to fail. It was one of the hardest lessons I have ever had – basically, my leadership skills were not good enough, and I really felt I had let my team down. But from a personal growth perspective, it was definitely one of my greatest lessons. I now seek to “trust but verify” – I will always care deeply about my colleagues and want to support them, but that doesn’t mean stepping in to do the job for them when things get tough.

In short: when you leave a role, you need to be sure that each individual feels good about needing to step up and do the right thing.

In your opinion, what should we be looking to change in the identity sector?

There is a lot we should challenge. If I had a crystal ball, it would be a lot easier, but there are so many different components, so many vendors that it can be really confusing. What we hear from customers is that there are a lot of players in our space, and unified platforms or the better integration of products from different vendors is essential to success. This sector is so focused on giving people access to fundamental, sometimes life-changing services that we need to be thinking much more about collaboration rather than worrying about how we compete with other vendors.

At BeyondTrust, we do think a lot about how we stay one step ahead of the fraudsters and criminals who reduce trust in our sector. We need to focus on predictive technologies so that we can stay ahead of the game and make each end user’s experience much more relevant. The technologists are already saying there is so much more that we can do.

As a senior woman who has progressed through the technology sector, what are we doing well and what do we need to do better?

There are definitely fewer women, but there are more and more coming through. I don’t want to hire people who look like me - different backgrounds and opinions give me so much more. But you have to want it. We need to offer an inclusive environment that covers so many different backgrounds to ensure we get that diversity of voice.

It’s why I love Women in Identity – you are putting a spotlight on the women in our sector, highlighting that there are lots of opportunities in identity, and that you don’t just have to be a technology geek. There are women, yes, in Marketing and HR, but also in Product, Finance, and Operations!

You’re a sponsor of Women in Identity; how do you encourage your own teams to embrace diversity?

- Recruitment – we focus on bringing in a broad range of candidates in the early stages and will continue to develop this.

- Look for diversity of thought – encourage everyone to speak and listen to those voices that are often quiet.

- My teams are already quite diverse in terms of gender and ethnicity. My own direct reports are 50:50 male and female. I hope that other females are encouraged by seeing me in a leadership role.

- Often, it is the educational background that can stifle diversity. I went to a smaller private university versus a larger, more recognized, tier-one educational institution in the United States. I believe your college experience and degree are a part of the equation for success, but it’s what you do later on and how you handle life’s experiences that really counts. We try to look for drive, hunger, passion, and a match to our corporate culture, not just college grades.

What advice would you give the younger you – and others who feel under-represented in our sector?

- Find a mentor. And, if you feel you can, offer yourself to others as a mentor.

- Put yourself out there. Spend time building relationships – see networking and those “water cooler” moments as part of developing you. Don’t just focus on doing the tasks you get paid for.

- Know your boundaries. Be open and accepting if you have other responsibilities outside of work. Don’t try to hide them.

What are you reading?

With work and family commitments, I only have time for audio books when I’m at the gym, but I’m currently reading Impact Players by Liz Wiseman - which focuses on the themes of adaptability and how to be impactful in a changing world.

Follow Janine on LinkedIn and @BeyondTrust on Twitter

Read more about BeyondTrust’s sponsorship of Women in Identity at https://www.womeninidentity.org/sponsors

-

-

Lilian Tseggai posted an article

A review: Roundtable discussion on enabling borderless digital identity.

Tamara Al-Salim reviews the roundtable discussion at the Singapore Fintech Festival

At the recent Singapore Fintech Festival (8-12 November), our own Tamara Al-Salim hosted and moderated a roundtable panel session on Enabling Borderless Digital Identity. The session explored the role of public/private partnerships in enabling an interoperable identity network. It was well attended and the debate was lively. The speakers were asked to share their views on trust and on how to innovate in a space where demand is growing for interoperability and for cross-border recognition of people and credentials, with people retaining self-sovereignty when consenting to access to their information.

The discussion highlighted common threads and differences in how government identity projects are delivered. Delivery by government mandate seemed to attract higher adoption because users are required to use the ID to access services. The pandemic has also acted as a catalyst, with government ID systems used for contact tracing or as a tool for two-factor authentication. Other governments chose to partner with private entities to run and deliver the program for the country. This approach started in a single sector, in this case finance, before widening as the benefits were realised. It clearly separates delivery from being government-led, while still giving end users a higher level of trust because the service is government-endorsed.

The speakers shared insights on the significant role digital identity plays in the growth of the digital economy: it drives inclusion by supporting increased access to public and private services for people, businesses and public institutions; it creates trust in a non-physical environment to enable everyone to interact and transact in a way that's authenticated and safe; it drives open markets and creates level playing fields for innovation in the interest of growth and choice for users; and finally, it lowers cost by finding ways of delivering digital services at scale.

With the right foundational digital infrastructure, digital identity, authentication and consent, interoperable payments and data exchange, we're able to create digital ID systems that can work across countries with trust, and limit independent verifications in the process.

-

Francesca Hobson posted an article

Is consumer identity different from citizen identity?

Susan Morrow explores whether citizen identity systems like the UK’s Verify initiative can give us a

I was heavily involved in the UK government Verify scheme for several years. It was a challenging service to build as the government designers behind the initiative had a very specific set of challenges. Firstly, they had to design for the type of wide demographic represented by an entire country. At the same time, the service had to be secure from fraud. This was and is a fine balancing act. It takes the usability vs. security debate to a whole new level.

Identity theft is a scourge of modern times. Javelin Research on identity theft and fraud has reported some interesting changes between 2017 and 2018. Although the overall number of victims decreased in 2018, this was mainly due to a decrease in card fraud. Overall, however, there has been an increase in accounts being opened in victims’ names. As someone working in the field of identity management, this concerns me greatly. The true cost of online impersonation has only just begun, and the industry needs to build bridges to stop this, now.

I believe that a way forward is to bring together the knowledge that government and commercial services, like banks, already have.

What have we learned from citizen identity?

There are a number of citizen identity schemes either in full production or in pilot stages across the world. Government needs to allow its citizens to access government online services to keep up with technology changes, reduce costs, and service citizen expectations.

But many government services have a high value. Online tax services, for example, have already been victims of fraud. A look at the IRS ‘dirty dozen’ list of scams used to defraud the U.S. tax system shows what they are up against.

Getting that heady mix of “identity for all” within a hardened identity framework is no mean feat. The UK government’s attempt at doing this has been heavily criticized. The UK’s National Audit Office (NAO) published a report that looked at the shortfall of Verify. These shortfalls are mainly a mix of cost (always an issue for government) and ‘match rates’.

Match rate refers to the ability to ‘verify’ an identity. Verification, in the case of Verify and other government identity services, means using third-party services, like Credit File Agencies and ID document checking services, to check an individual. The output from these verification checks, along with the credentials used to authenticate the individual, determines that person’s ‘level of assurance’, or LOA.

In the case of Verify, there were two levels that could be achieved, LOA1 and LOA2.

The idea of ‘levels of assurance’ is not confined to the UK government. NIST originally came up with 4 levels of assurance but recently ‘retired’ this concept. This is what NIST says in Special Publication 800-63, Digital Identity Guidelines:

“Rather, by combining appropriate business and privacy risk management side-by-side with mission need, agencies will select IAL, AAL, and FAL as distinct options. While many systems will have the same numerical level for each of IAL, AAL, and FAL, this is not a requirement and agencies should not assume they will be the same in any given system.”

IAL, AAL, and FAL (Identity, Authenticator, and Federation Assurance Levels) cover verification, authentication, and ‘strength of federated assertion’ respectively.
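To make the separation concrete, here is a minimal Python sketch. The `AssuranceProfile` type, the `meets` helper, and the example services and values are all hypothetical illustrations, not anything defined by NIST or by Verify. It only shows the three levels being recorded and compared independently instead of being collapsed into a single LOA number.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AssuranceProfile:
    """Hypothetical container: the three levels are tracked separately."""
    ial: int  # identity proofing (verification) level
    aal: int  # authentication level
    fal: int  # strength of the federated assertion


def meets(actual: AssuranceProfile, required: AssuranceProfile) -> bool:
    """True only if every level is at least what the service requires."""
    return (
        actual.ial >= required.ial
        and actual.aal >= required.aal
        and actual.fal >= required.fal
    )


# Illustrative values only: a service may ask for strong authentication
# without asking for strong identity proofing.
newsletter = AssuranceProfile(ial=1, aal=2, fal=1)
tax_filing = AssuranceProfile(ial=2, aal=2, fal=2)

user = AssuranceProfile(ial=1, aal=2, fal=1)
print(meets(user, newsletter))  # True
print(meets(user, tax_filing))  # False
```

Keeping the three numbers apart is what lets a service state its needs precisely; a single combined level would force the higher requirement onto all three.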

NIST, in my opinion, are very sensible in doing this, but could have gone further. I have always argued that strict LOA is based on a highly prescriptive set of requirements that are not flexible enough to service modern ID needs.

The reality is that LOA is only a subset of an identity and it’s the underlying attributes that are needed to do online jobs.

So, this now brings us onto how citizen ID, made up of often static, inflexible ‘levels of assurance’ can be molded to the needs of modern consumer-centric commerce?

Waste not, want not: how LOA is part of doing online tasks

Verify was (and is) a costly exercise for government to bear. So, one of the ways of offsetting this cost is to allow commercial entities to utilize citizen IDs created under the scheme. Makes sense? If a user is able to get a Verify identity or any other government identity, they will have been through a tough user journey. However, commercial organizations have their own, unique set of needs. Simply having a LOA and associated attributes may not be enough.

- A bank, for instance, will need their own set of KYC/AML checks

- If a person applies for a financial product, a LOA wouldn’t have the right financial data to complete the task

- If you apply for a job online, a government identity wouldn't have your professional certifications embedded.

I could go on; you get the idea.

LOA is a useful guide to the verification status of a person. But it is only one part of the data needed to transact. But, over 4 million UK users have a UK Verify identity. It would be a crying shame to not take advantage of these, already verified, identities.

In this case, LOA can be thought of as its own attribute and can be used to build friction-reduced, but with assurance, identities.
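One way to picture "LOA as its own attribute" is the small Python sketch below. Every name in it (`missing_attributes`, the attribute keys, the bank's requirements) is a hypothetical example, not part of Verify or of any real bank's process. The relying party checks LOA alongside the other attributes it needs and finds out what it still has to collect itself.

```python
def missing_attributes(identity: dict, required: dict) -> list:
    """Return the names of required attributes the identity cannot satisfy.

    `required` maps an attribute name to a predicate over its value.
    """
    missing = []
    for name, is_acceptable in required.items():
        if name not in identity or not is_acceptable(identity[name]):
            missing.append(name)
    return missing


# Hypothetical government-issued identity: LOA is just one attribute.
gov_identity = {"name": "A. Citizen", "date_of_birth": "1990-01-01", "loa": 2}

# Hypothetical bank requirements: the LOA helps, but the bank's own
# KYC/AML result is still needed before it can transact.
bank_requirements = {
    "loa": lambda level: level >= 2,
    "kyc_aml_check": lambda passed: passed is True,
}

print(missing_attributes(gov_identity, bank_requirements))  # ['kyc_aml_check']
```

Here the verified identity removes one step from the journey, and the bank only has to ask for what is genuinely missing.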

In Hub we trust

The trick is in how you use the government identity. Most of them are based on the SAML 2.0 protocol, although some will move over to OpenID Connect at some point. This means that if you want to use the government identity for your commercial service you need to consume that protocol. In addition, the ‘flavor’ of the protocol may not fit in with the expectations of your service.

Verified IDs and other identity accounts can be used by commercial services - but this has to be part of a wider ecosystem. The days of silo’ed online identity are numbered, and silos may even be holding us back.

Having your identity cake and eating the attributes can be afforded by going back to the idea of an extended identity ecosystem. A hub or proxy is a component of the wider identity ecosystem that provides the switch to connect up the relying party services and the other parts of the system, like the identity providers. For example, a government identity could be pulled into a banking system through a hub - the hub translating the government identity for the bank service. The bank may still need some specific attributes, but the verified government identity provides a good backbone and can even help to reduce the friction in creating an online bank account.
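The translation step a hub performs can be sketched roughly as follows. This is a simplified Python illustration under stated assumptions: the attribute names, the `GOV_TO_BANK` mapping, and both helper functions are hypothetical, and the sketch works on already-parsed attributes. A real hub would also have to consume the protocol itself (for example SAML 2.0) and validate the assertion, none of which is shown here.

```python
# Hypothetical mapping from government attribute names to the names the
# bank's service expects. Neither set of names comes from a real scheme.
GOV_TO_BANK = {
    "given_name": "firstName",
    "family_name": "lastName",
    "loa": "assuranceLevel",
}


def hub_translate(gov_attributes: dict) -> dict:
    """Rename the attributes the hub knows about; drop everything else."""
    return {
        bank_name: gov_attributes[gov_name]
        for gov_name, bank_name in GOV_TO_BANK.items()
        if gov_name in gov_attributes
    }


def still_needed(bank_claims: dict, bank_required: set) -> set:
    """Attributes the bank must collect itself after translation."""
    return bank_required - set(bank_claims)


gov_attributes = {"given_name": "Ada", "family_name": "Citizen", "loa": 2}
bank_claims = hub_translate(gov_attributes)
print(bank_claims)

required = {"firstName", "lastName", "assuranceLevel", "sourceOfFunds"}
print(still_needed(bank_claims, required))  # {'sourceOfFunds'}
```

The government identity supplies the backbone, and the bank asks the user only for the specific attributes the hub could not provide.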

Conclusion

Governments have been at the forefront of verified identity systems. They have designed IAM with wide-demographic users in mind. But they have also had to finely balance anti-fraud requirements with this. Governments cannot afford to junk their identities, and instead, need to allow their reuse in a commercial setting. A direct use case may not always fit the needs of commerce, but a hard-won high level of assurance identity can help to augment online identity and data needs of commercial services. It makes sense then, to look at ways of facilitating the use of government IDs in commercial settings, but we need to consider LOA as an attribute in its own right rather than as a complete digital identity.

Author

Susan Morrow

Having worked in cybersecurity, identity, and data privacy for around 35 years, Susan has seen technology come and go; but one thing is constant – human behaviour. She works to bring technology and humans together.

Find her @avocoidentity

-

Francesca Hobson posted an article

Savita Bailur and Hélène Smertnik: Researching Women and Identification in a Digital Age

What do you do in the industry? What does Caribou Digital do?

Savita: I’ve worked with Caribou Digital for 4 years now. As part of the research team, I lead research projects on experiences of digital life - we’ve worked on overall online use by lower income demographics in emerging economies (what we used to call “ICT4D”!), digital financial services but also increasingly “identification in a digital age”. This might be a good time to say we prefer to use the phrase “identification in a digital age” rather than “digital identity” (see our colleague Jonathan’s great piece on the terminology and why).

Hélène in Kakuma refugee camp in Kenya

Just the other day, we realised we’ve now conducted fieldwork in over ten countries on “ID” (to use the shorthand): a few of those are Kenya, Uganda, Bangladesh, Côte d'Ivoire, India, Lebanon and Thailand, working with clients such as Omidyar, Aus Aid, World Bank, UNICEF and the Gates Foundation. In addition to conducting the research, we share our findings and advise on relevant strategy and policy both in the public and private sector. Caribou Digital has a much wider scope of work (all around supporting ethical digital economies in emerging markets), which you can see on cariboudigital.net but that’s just us on the research side!

Hélène: I’ve been working with Caribou Digital for 2 years, conducting research and leading ground work, mainly on identification questions in countries across Africa and Asia. I don't really have a typical week, but a cycle of work through projects. It starts with pre-field research - working with Savita on the framing of the research and setting everything up for ground work, including finding local partners - then moves to the fieldwork itself and the post-field wrap-up.

How do you determine where you run the research?

S: We start at a high level determining the intent and scope first for clients (what is it we are trying to find out?), and after we work on the details collaboratively. We think about the demographics to work with - we know qualitative research (which Hélène and I largely do) is not meant to be representative, but we do need to think about who we talk to - a typical cross-section may be “expert” interviewees, middlemen/women who are intermediaries (e.g. mobile money agents) and the “end users” either in focus groups or more in-depth interviews. We don’t really like the term end users (we are just humans!) but I guess that’s the shorthand. Then Hélène mobilises the teams. She’s fantastic at finding the people on the ground and building up trusting relationships with people. We always try to do a pilot study so we can test and refine questions and demographics. Ethics are paramount to us so we make sure we go through a code of conduct with partners, and consent with respondents.

H: Savita has such a wealth of experience in the topic. She knows what the important issues are which haven't been thoroughly researched yet and sees where processes are inefficient.

What do companies look for when they come to you?

H: They are not always companies - they can also be foundations, governments, NGOs etc. Often they come from a perspective of wanting to know more about end users’ experiences, as often they haven't been looking at the issues at stake at the same granular level that we get with our qualitative research. With UNICEF it's been a unique piece of work looking at youth and adolescence and the overlap between identification and identity. I was interviewing a child who was 10 years old in a refugee camp in Lebanon, who was acutely aware of what ID is and the need for documents. I've found the more privileged the child the less aware they are of identification documents and their use. This child told me: 'it's the document that my dad takes to work with him every day', as this is a key enabler of their rights to move around in the country. That's generally not an issue for children in more privileged communities.

S: It's certainly a challenging and delicate subject to address when doing field research. To find out how access to identification affects people on the ground requires good rapport and a large dose of empathy, which Hélène is brilliant at. The key is asking questions without being intrusive - imagine if some random person came and started asking you questions on your identification documents - how would you feel?

What areas are you working on next then?

S: Well, we’re just wrapping up our in-depth ID research in Bangladesh and Sri Lanka on women, work and ID, funded by Aus Aid. It was part of the Commonwealth Identity Initiative with GSMA and the World Bank. Next we’re starting research with Gates on how digital financial service principles they established (Level One) may have a different impact on women, including interoperability in mobile money - I do think this will also bring up gender and ID issues, like around KYC (whose ID is used to register a SIM?). We’ll be working in Kenya and Cote d’Ivoire.

From your research you’ve identified 5 fundamental barriers of access for women. You must see great variation in use of identification between countries depending on the availability of information, access, ownership, societal expectations and intersectionality?

Savita in Abengourou, rural Côte d'Ivoire

S: Yes, these barriers vary between countries, depending on everything from infrastructure to social norms. That’s why we keep saying you can’t just go in and dump a new “digital ID” system - you have to do some user research. For example, we have enough evidence now that people get nervous around biometrics, especially women in some cultures when they are touched - can we address that issue? Or that even if women have their own ID, it may be the men in their families who keep them - how do we address this?

H: We saw in Bangladesh that often women didn't have the time to go and access services, as they were looking after their families, or they didn't have the means to travel. Depending on the type of work they’re doing, this may or may not be an issue, but it does become a barrier at some points.

What will you be speaking about at ID2020?

S: ID2020 reached out to me to do a keynote, which was nice as they come from a more private sector angle (e.g. the alliance includes Accenture, Microsoft, Rockefeller and many others). So it’s a good audience to take our “end user” research to. Our work brings forward end user research and adds the perspective of the human voice. We’re telling the story of Humans of ID, layering it in with the context of increasingly digital societies in emerging markets. It’s a story that all of us can relate to, as identity and identification go back to the beginnings of humanity.

What brought you into this field?

H: I still find it fascinating that we all live and breathe identity all the time. I now notice it everywhere - whether it’s a clothing collection launch on Instagram called ‘ID’ or in films like Capernaum. We all have a unique identity story that defines us and you can see that in culture globally.

S: All of us who have moved around the world can relate to the identification issue (I was born in India, moved to the UK, now in the USA, and at various points had all that change codified in documents and credentials).

There are so many stories about identification in the bureaucratic sense and how it crosses over with identity - the film Lion for example, or the Tom Hanks film The Terminal, or the book Educated (Tara Westover), in which she grows up in an American family without a birth certificate and is home schooled, so she has no ID at all and has to navigate that. Two powerful pieces of journalism struck me just this year. One was about an Iraqi boy who is reunited with his mother after years, thanks to different types of identification. Another was Azeteng’s story of human trafficking through West Africa. When his Guinean friend Sekou is murdered by the human smugglers, Azeteng keeps his ID. The journalist asks him why he keeps Sekou’s ID. He says: “That is someone’s son, someone’s brother, who knows, maybe even someone’s father. I asked myself, how will his family know that he is dead? So I am trying my best for the family to be aware.”

We are becoming such a globalised society and many are stateless for one reason or another - if you're a migrant worker who’s newly arrived in a new city, but don’t have the right ID you can be completely isolated from applying to jobs (look at the IDNYC by the way - really interesting case). We often take our access to services and help for granted, but the additional challenge is a lot of people don't have the time or ability to sort these issues out for themselves.

It must be striking seeing how ID isn’t just a means to accessing essential rights, but also impacts on heritage?

S: Absolutely, on Sri Lankan tea estates workers used to be given numbers not names when they were born. Honestly, a lot of the identification issues are legacies of colonialism and the carving up of countries - the complex case of Cote d’Ivoire for example where the Burkinabe communities have settled in Cote d’Ivoire but are not considered Ivorian. Or what is happening in Assam. Or even the appalling Windrush case - we need to face up to the fact that identification is also a question of power with terrible consequences. And we cannot make the same mistakes again and again by classifying people in a particular way.

H: You start to see the impact of these problems through generations, for example where parents are displaced or lose their identification documents. The barriers you face in your own access to ID often then have an impact on your children’s experience. Consistently we see identification is an essential enabler for social and economic inclusion, though sometimes it isn’t thought about as such and is taken for granted.

When you present to private sector organisations, what do you find surprises people the most?

A woman registering for a bank account in Assam, India for Caribou Digital’s Identities Research

S: Often the private sector has a totally different angle, as their primary concern is with identification for a single task or service. It’s a one-time necessity and it’s not their job to think about the ethical issues that may arise from how people use it.

H: We question the role of the private sector in our research - are they responsible for people’s accessing and using ID? Take the case of Sri Lankan online companies we spoke to - they may facilitate online work, like people creating a webpage or managing social media for a client. We’ve seen that these companies may not check the ID of the individual that signs up to do the task, unless it requires dealing with sensitive information. Is it that company’s job to educate the people who work on the platform about the importance of ID? Similarly, is it the role of factories to make sure that their employees have ID or is it better that they employ them as the employees need the money? Some companies mentioned they would try to create more awareness around financial inclusion - encouraging them to get access to formal bank or mobile money accounts.

S: So we come back to the question, why do we need ID? There are a number of conversations going on about standards and interoperability, but someone pointed out to me the other day that passports are a universal system, but birth certificates are not. You can't check a birth certificate beyond making sure the hospital is real, as really you could create a fake one at home, and then a passport is often built off that. The other issue we saw is with voter ID, which is generally issued when parties are campaigning for an election - so in rural India, a political party may happily make you 18 so you vote for them. There's very little standardisation across the board, particularly concerning initial ID.

H: We're very conscious in the recommendations that we make to organisations that we think identification enables inclusion and growth, however, once the need for ID becomes mandatory, you may end up excluding people.

S: Yes, it raises the question, If you've got no ID then what happens?

You covered that experience in a number of your articles, what did you find?

H: It really varies. In the research we just finished in Sri Lanka, there were a couple of people who didn't have ID. One used their sister’s ID until they could pay for the lawyer to get their ID sorted. The other person was a gentleman who was just getting by with cash and operating off the grid. In Bangladesh there were far more people “off the ID grid” and using other people's ID when they needed to access services.

In Sri Lanka, Gayani (left) holds the old laminated paper ID and Rangala holds a new smart ID

S: You see a number of systems and means of access are interdependent. Cote d'Ivoire went through mandatory mobile SIM registration with a biometric ID (for national security) but it did impact on those who didn’t have a biometric ID. Most importantly, it meant they didn’t have access to mobile money.

H: Really the government were trying to push people to get the new biometric ID, and using that specific threat of cutting your mobile line is very strong. In our research, we’ve often found that one of the main drivers to getting an ID in the first place was to be able to own a SIM, so you see how strong that threat is. In addition, mobile money is dependent on your SIM so if you don't have access to a phone line then you can't use mobile money services (e.g. you can’t receive or send money, you can’t take small loans or make savings, through the platform).

S: As a result, those who wouldn’t register for a biometric ID, would have to go through someone else to get their money, which becomes really risky. Researching these issues has made me realise that ID is the foundation for everything.

Yes, and you hear of women having problems travelling with children if they haven’t changed their name.

H: There’s a big - not explored enough - issue with marriage, changing names, movement after marriage wherever you are in the world. In Kenya you choose whether you keep your father’s name or take your husband’s; this decision can have significant impact later on.

S: Coming full circle on the women and ID issue you’re talking to us about - I do wonder if women face ID issues more than men, which is worsened by the lack of clarity on who to go to for help (what we talk about in our blog). Just my example again, when my husband and I were trying to get married in the UK, as he was not a British national we faced a lot of challenges - we ran around asking so many different organisations, lost time from work, spent money on travel, but we were just ultimately reliant on individuals helping (or not!). Those who do are the true heroes keeping it together. In contrast, women are not always supported in other countries, which is why I keep going on about intermediaries - and that’s where the role of NGOs and females in advisory committees is so essential. There’s still a lot of both research and policy work for us to do when we talk of women and identification in a digital age.

Find them at @SavitaBaliur and @HeleneSmertnik

Read more of their work at cariboudigital.net

-

Francesca Hobson posted an article

Rising to the challenge of #GoodID

Emma Lindley, of Women in Identity, explains why diversity in the Identity sector goes far beyond simply supporting the progress of the women who work in it.

This week multiple organisations including ID4Africa, UNICEF, World Bank Group, Omidyar Network, Women in Identity and other communities have come together to promote the campaign of a verifiable identity for every citizen across the globe.

The 16th of September was International Identity Day and, today, September 19th, hundreds of delegates will meet in New York at the ID2020 summit to discuss how to address the fundamental issue that over 1.1bn people have no formal means of proving who they are - that’s 1 in 7 people. But it doesn’t stop there.

The World Bank estimates that in low income countries over 45% of women lack a foundational ID, which leads to a raft of socio-cultural issues that have far reaching implications for the inclusion of women and girls in our social and economic systems. How do we support women to gain foundational identity, to then empower them to build micro-businesses across places like Kenya or Bangladesh?

In their excellent blog, Identity is a human right … a woman’s right … Dr. Savita Bailur (Caribou Digital), Devina Srivastava (ID2020) and Hélène Smertnik (Caribou Digital) highlight that women specifically suffer from identity poverty. They challenge that we need to focus on ways of creating #goodID that considers socio-cultural issues, data privacy concerns and consumer access to relevant technologies, regardless of nationality, gender or financial status. More than ever, we need the collaboration and the community in those markets made up of governments, regulators, companies as well as the technologists who will produce the identity systems of the future.

Is the Identity industry ready for #GoodID?

New identity “solutions” emerge every day, and yet despite them being developed in places like London and Silicon Valley with high levels of diversity, we still see many start-ups and established companies with little or no level of diversity in the teams building these solutions - they're all people from the same country, same socio-economic background, the same culture and same gender. When it comes to developing solutions that can be truly viewed as global, do we really understand the problem we are trying to solve? And do we have the right mix of people involved in helping us understand?

In a study on gender diversity, the UNESCO Institute for Statistics examined the gender gap in science and found that, worldwide, only 28% of science professionals are women. In Sub-Saharan Africa, only 30% of women are exploring careers in STEM and in Silicon Valley, 76% of technical jobs are held by men. (Source: Forbes). But it’s not just gender diversity that is the issue. In the UK just 8.5% of senior leaders in technology are from a minority background.

The identity industry is no different. We do not have enough diversity, particularly at the coal face of product development. And this leads to the introduction of bias - a direct challenge to the aspirations of #GoodID.

We all apply natural biases through our daily lives - in our hiring and buying decisions, reviews and even in casual interactions. However well-intentioned we may be, our unconscious biases perpetuate stereotypes.

If everyone on your team looks the same and is from a similar background, you may reach consensus quicker on the main priorities for the company. But are those decisions the right ones? If your identity solution is designed to help recognise users that might struggle to prove their identity, how many of your team understand what it means to have no financial footprint or to live on benefits in social housing?

You may have a healthy representation of women across the organisation. But if they all tend to hail from the same cultural or educational background as male colleagues in similar roles, you’re not going to get much diversity of thinking when it comes to problem solving.

The effects of bias

We are now starting to see the effects of bias within technology. And many of the solutions under analysis sit within or adjacent to the identity industry.

A study by MIT researcher Joy Buolamwini found that some facial-recognition systems produced an error rate of 0.8% for light-skinned men. This error rate increased when a white female face was shown and ballooned to 34.7% for dark-skinned women.

According to the study, researchers at a major U.S. technology company claimed an accuracy rate of more than 97% for a facial-recognition system they’d designed. But the data set used to assess its performance was 77% male and 83% white.
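The arithmetic behind that kind of headline figure can be sketched in a few lines. This is an illustration only: the two error rates are the ones quoted from the study above, but the two-group split and the group shares are hypothetical values chosen to show how a skewed test set can mask a large disparity.

```python
# Illustrative only: the error rates come from the study quoted above;
# the two-group split and the group shares are hypothetical assumptions.

def overall_accuracy(groups):
    """Return accuracy for a test set described as (share, error_rate) pairs.

    Shares are fractions of the test set and must sum to 1.
    """
    total_share = sum(share for share, _ in groups)
    if abs(total_share - 1.0) > 1e-9:
        raise ValueError("group shares must sum to 1")
    weighted_error = sum(share * error for share, error in groups)
    return 1.0 - weighted_error


# Hypothetical skewed test set: 95% of faces from the best-served group.
skewed_test_set = [
    (0.95, 0.008),  # light-skinned men, 0.8% error
    (0.05, 0.347),  # dark-skinned women, 34.7% error
]

# Hypothetical balanced test set with the same per-group error rates.
balanced_test_set = [
    (0.50, 0.008),
    (0.50, 0.347),
]

print(f"skewed test set:   {overall_accuracy(skewed_test_set):.1%}")
print(f"balanced test set: {overall_accuracy(balanced_test_set):.1%}")
```

With these assumed shares, the skewed test set reports an accuracy of roughly 97.5%, while the balanced one drops to about 82%, even though the system itself has not changed. A single overall accuracy figure says little unless it is broken down by group.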

If the systems that run our banks, public services, travel companies and retailers are designed and tested with this level of obvious bias, the impact on all economies will be huge. In a world focused on driving efficiencies through the adoption of digital services, we need to ensure we are building for greater inclusion. It is an area that digital identity needs to focus on.

Can diversity help?

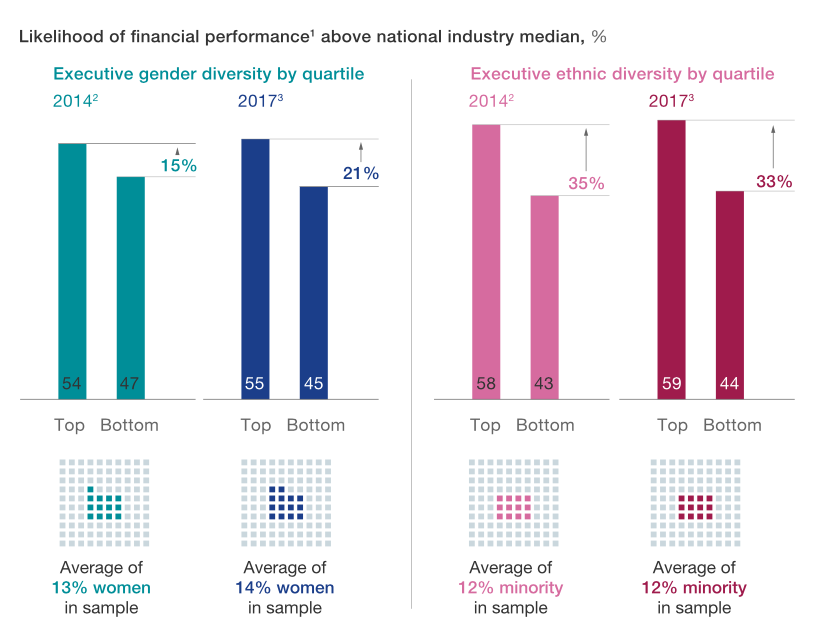

A study by the Boston Consulting Group (BCG) found that diversity increases the bottom line for companies. In both developing and developed economies, companies with above-average diversity on their leadership teams report a greater payoff from innovation and higher EBIT margins. The study found that "increasing the diversity of leadership teams leads to more and better innovation and improved financial performance." Companies that have more diverse management teams also have 19% higher revenue due to innovation.

McKinsey Delivering Through Diversity

Additionally, research by McKinsey, Delivering Through Diversity, reaffirms the global relevance of the link between diversity (a greater proportion of women and a more mixed ethnic and cultural composition in the leadership of large companies) and company financial performance. McKinsey measured not only profitability but also longer-term value creation, exploring diversity at different levels of the organisation and considering a broader understanding of diversity beyond gender and ethnicity.

These findings are hugely significant for tech companies, particularly given the number of start-ups where innovation is the key to growth. It shows that diversity is not just an aspirational metric; it is actually an integral part of any successful public, private or not-for-profit organisation.

What does this mean for the Identity industry?

When we think about this in the context of identity solutions, the need for diversity is even more fundamental. We deal in humanity and humanity is diverse; so the solutions we develop need to be able to recognise and embrace this diversity.

As an industry, we are developing standards, technologies and solutions aimed at confirming people’s identity. So we need to consider also how we include those we aim to identify within our teams: in the design, testing and deployment of products and services.

Digital identity solutions built for everyone should be built by everyone.

The bottom line is: we’re failing as a community to build the diverse, inclusive teams that are best equipped to tackle the world’s identity challenges. And, as a result we run the very real risk of failing to provide the products and services the world's population actually needs. So, join the campaign for #GoodID by sharing this blog and ensuring your voice is included.

Author

Emma Lindley

Emma Lindley is co-founder of Women in Identity, a not for profit organisation focused on developing talent and diversity in the identity industry, and executive advisor on digital identity for Truststamp, a provider of privacy protecting technology for the identity industry.

Over a career of 16 years in identity Emma has held various roles, most recently as Head of Identity and Risk at Visa, previous board level roles at Confyrm, Innovate Identity and The Open Identity Exchange, and was instrumental in the commercial development of GB Group’s position in the identity market back in 2003.

She has been recognised in the Innovate Finance Powerlist for Women 2016 and 2017, KNOW Identity Top 100 leaders in Identity in 2017, 2018 and 2019, 100 Women in Tech Awards 2019, and was voted CEO of the year at the KNOW Identity Awards. She has an MBA from Manchester Business School and completed her thesis in Competitive Strategy in the Identity Market.

-

Francesca Hobson posted an article

Have We Reached Peak Privacy?

A celebration of data privacy month and how privacy needs to remain at the forefront of IAM.

Data privacy has made big headlines in the last 12 months. Wherever we look there is an article about a data breach, a data protection regulation update, or a colleague talking about data privacy. It may have all gotten too much and we have to ask ourselves - have we reached “peak privacy”?

In the identity space, data privacy was never really a consideration until we entered the realms of the consumer. In enterprise IAM, although we were, in fact, using the personal data of our employees, privacy was rarely, if ever, mentioned. When the enterprise perimeter earthquake happened, and we moved our IAM services to cover consumers and citizens, data privacy started to enter the industry parlance.

Why it is important to not become jaded about privacy

Data breaches and privacy violations can almost be thought of as a kind of ‘digital trauma’. When I heard about the Collection #1 data breach which exposed 773 million data records, I just thought, “Oh no, not again”. I searched HaveIBeenPwned and sure enough, my email address showed I was part of the data breach. But I didn’t feel worried, as I should have, because I have become desensitized.

Desensitization is a common issue amongst people who experience trauma. So, for example, teenagers who are subjected to real-life violence become less affected by acts of violence than their counterparts. If you experience something over and over you do get used to it happening. That does not, however, mean that it should be tolerated.

As I write, there will be continued breaches that affect our personal data. GDPR helps to focus the mind of organization leaders, but it does not stop cybercriminals trying to get at our personal data. Since the GDPR came into effect, law firm DLA Piper has recorded 59,000 personal data breaches across Europe.

As custodians and processors of personal data, we can’t just turn a blind eye to privacy. It hurts our businesses as much as it hurts the customer who forgoes privacy. A report by Privitar said that 90 percent of consumers are concerned that technological advancements are a risk to data privacy.

Tech and privacy - A good double act in IAM?

IAM platforms have needed to innovate to keep up with the tidal wave of personal data and to improve customer experience. Data is an incredibly useful commodity that can be used to do online jobs, including making onboarding processes digital. Privacy, as seen through the lens of the IAM technology stack, should be intrinsic across a platform. But what does that mean in practical terms? Can we have our privacy cake and eat it?

Privacy peak 1: Great UI/UX can facilitate good data privacy

The touchpoint between the identity management backend and the user is where the data privacy choice begins. It is also where your relationship with the customer begins. Privacy is an intrinsic part of trust which is a relationship building tool. Your UX should guide your customers down a pathway that distills privacy for them. The UI should reflect the data processing you do in a simple way. If you do this, you start on a pathway to trust by being privacy respectful.

Privacy peak 2: Deliver what you promise

If you tell users you won’t use their data for X or Y, then don’t. If you tell users you will use their data to give them a better service, do so. This type of basic thinking has to be part of the design process at the beginning of building a service. If you have to retro-fit it, it is harder to do, but not impossible. Using identity API-based service architecture can help to facilitate the addition of missing features that enhance privacy.

Privacy peak 3: Consent is fluid

Consent management comes in many forms. You should have already taken consent when you first touched the customer’s data. However, consent is fluid. People change their minds. Build consent for data privacy control into the system, end to end. This can be included in transaction consent - OAuth 2.0 and UMA are example protocols for achieving this. Consent management can also be included in the user’s account manager. Consumer IAM vendors are now beginning to add in the ability to manage consents across services. Even the blockchain can add value here - used as a layer for consent transaction receipt and audit, it offers an immutable way to show that you have taken the consent requirements of the GDPR seriously.
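To make the idea of fluid consent with an auditable receipt trail more concrete, here is a minimal sketch. It is not OAuth 2.0, UMA or a blockchain: it is a plain hash-chained, append-only log, and the `ConsentLedger` class, its field names and the example identifiers are all hypothetical. The hash chain makes tampering detectable rather than impossible.

```python
import hashlib
import json
from dataclasses import dataclass, field
from typing import Dict, List


def _digest(body: Dict) -> str:
    """Hash a record body deterministically (sorted keys, UTF-8 JSON)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


@dataclass
class ConsentLedger:
    """Hypothetical append-only consent log; each entry links to the previous hash."""

    entries: List[Dict] = field(default_factory=list)

    def record(self, user_id: str, purpose: str, granted: bool, timestamp: str) -> Dict:
        """Append a consent decision (grant or withdrawal) as a new receipt."""
        prev_hash = self.entries[-1]["hash"] if self.entries else "0" * 64
        body = {
            "user_id": user_id,
            "purpose": purpose,
            "granted": granted,
            "timestamp": timestamp,
            "prev_hash": prev_hash,
        }
        entry = {**body, "hash": _digest(body)}
        self.entries.append(entry)
        return entry

    def current_consent(self, user_id: str, purpose: str) -> bool:
        """The latest decision wins; no record at all means no consent."""
        for entry in reversed(self.entries):
            if entry["user_id"] == user_id and entry["purpose"] == purpose:
                return entry["granted"]
        return False

    def verify(self) -> bool:
        """Recompute every hash and link to detect edits to past receipts."""
        prev_hash = "0" * 64
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if entry["prev_hash"] != prev_hash or entry["hash"] != _digest(body):
                return False
            prev_hash = entry["hash"]
        return True


ledger = ConsentLedger()
ledger.record("user-1", "marketing_email", True, "2019-02-01T10:00:00Z")
ledger.record("user-1", "marketing_email", False, "2019-02-15T09:30:00Z")
print(ledger.current_consent("user-1", "marketing_email"))  # False: consent was withdrawn
print(ledger.verify())  # True: the chain of receipts is intact
```

The design choice here is that a change of mind never overwrites history: a withdrawal is simply a new receipt, so the service can answer both "what does the user consent to now?" and "what had they consented to at the time?".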

Privacy peak 4: Technology is the friend of privacy

Privacy is about individual choice, but data privacy is augmented and enforced using technology solutions. Always use the best possible security solutions to enforce the privacy choices of your customers. Make these as seamless as possible. This can be a challenge in certain customer-facing areas, like authentication. But the world of authentication is starting to offer solutions to the conundrum of usability vs. security. Other areas, like data in transit and at rest should be secured, by design, in any system that moves personal data, in all of its forms, around.

Let’s make data privacy month data privacy by default

Data Privacy Day has now become Data Privacy Month which runs throughout February. As custodians of people's data, we should never, ever, be desensitized or complacent about data privacy. Data privacy holds the key to the relationship we need to build between our service and our customer. Privacy is not about hiding data, it is about using it with due respect to the person that data represents. When you next set out an RFP for an identity service, make sure you add in a requirement that asks for privacy by default.

Author

Susan Morrow

Having worked in cybersecurity, identity, and data privacy for around 35 years, Susan has seen technology come and go; but one thing is constant – human behaviour. She works to bring technology and humans together.

Find her @avocoidentity

-

Francesca Hobson posted an article

Three Questions Around Self Sovereign Identity

Is Self Sovereign Identity a panacea or an also ran?

If you work in the area of identity you will have noticed a lot of talk about Self Sovereign Identity (SSI). As a concept, it applies the goal of placing the user at the centre of digital identity management and control. User-centric digital identity is not a new idea. I first came across it back in 2008 when I read Kim Cameron’s 7 Laws of Identity – the piece itself going back to 2005; law 1 states that “No one is as pivotal to the success of the identity metasystem as the individual who uses it.”

SSI is user-centric, but you don’t need to have a Self Sovereign ID system for it to be user-centric.

On paper, I like the idea of a Self Sovereign Identity. After all, digital identity is about what you do with the information that makes up who you are – surely that should be under your control? Yet still, I have lingering questions that make me question the ability of SSI to fulfil my identity needs.

A really quick bit on what SSI is?

This isn’t a post about what Self Sovereign Identity is, there are plenty of articles on that topic. But I will give you a very quick and dirty overview of what the technology is about.

SSI is fundamentally reliant on blockchain to register the attributes of a person’s identity. What does that mean? Your identity data (attributes or claims) – the stuff that determines your digital you, or that a thing is that thing – are registered to a block on a blockchain. The blockchain is a distributed ledger, i.e. it has no central authority controlling it; it is decentralized. The subsequent decentralized claims are then part of a person’s identifying data that they can share, under their control, with a requesting party – like a bank or a government service, etc.

The substance of the SSI is based on the idea of verifiable claims. If you follow my blog you’ll know that verification is a thorny issue in the digital identity space. It is certainly not straightforward and can do with a sprinkle of ‘user friendly’ if you ask me. But organizations like Sovrin, who are offering a backbone for SSI, are built upon the notion of verifiable claims being managed through a distributed ledger technology backbone specifically attuned to digital identity.

Verifiable claims

I just want to talk a little about the notion of a verifiable claim. For a piece of data on an individual to carry any weight it has to be true or at least have a probability of truth that satisfies the service provider. Claims that are checked (verified) by a trusted third party are deemed to be verifiable. Web standards custodians, W3C, have looked at the issues around standards for verifiable claims. The research findings of the group come down heavily on the side of user-centric and privacy enhanced. There is a very strong value statement driving their work “No User-Centric, Privacy-Enhancing Ecosystem Exists for Verifiable Claims”.

The research concludes several things including:

“Trust is decentralized. Consumers of verifiable claims decide which issuers to trust.”

And

“Users may share verifiable claims without revealing the intended recipient to the software agent they use to store the claims.”

But, in the context of this article, do you need a decentralized identity system to have decentralized verifiable claims? Or can you have one without the other?
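To make the idea of a verifiable claim concrete, here is a minimal sketch of an issuer signing a claim and a service provider deciding which issuers it trusts. Everything in it is an assumption made for illustration: the issuer names, the demo keys, and the function names are hypothetical, and a shared-secret HMAC stands in for the public-key signatures a real scheme would use. It is not how Sovrin or the W3C work is implemented.

```python
import hashlib
import hmac
import json

# Hypothetical issuers and demo keys, for illustration only.
# Real systems would use public-key signatures; a shared-secret HMAC
# is used here purely to keep the sketch within the standard library.
ISSUER_KEYS = {
    "passport-office": b"demo-key-1",
    "unknown-issuer": b"demo-key-2",
}


def _digest(key, issuer, subject, attribute, value):
    """Compute a keyed digest over the fields of a claim."""
    payload = json.dumps([issuer, subject, attribute, value]).encode("utf-8")
    return hmac.new(key, payload, hashlib.sha256).hexdigest()


def issue_claim(issuer, subject, attribute, value):
    """The issuer checks the attribute out of band, then signs it."""
    signature = _digest(ISSUER_KEYS[issuer], issuer, subject, attribute, value)
    return {
        "issuer": issuer,
        "subject": subject,
        "attribute": attribute,
        "value": value,
        "signature": signature,
    }


def verify_claim(claim, trusted_issuers):
    """Accept a claim only if the consumer trusts the issuer and the
    signature matches the claim's contents."""
    issuer = claim["issuer"]
    if issuer not in trusted_issuers or issuer not in ISSUER_KEYS:
        return False
    expected = _digest(
        ISSUER_KEYS[issuer],
        issuer,
        claim["subject"],
        claim["attribute"],
        claim["value"],
    )
    return hmac.compare_digest(expected, claim["signature"])
```

The `trusted_issuers` argument is where "consumers of verifiable claims decide which issuers to trust" shows up: the same claim can be accepted by one service and rejected by another. Nothing in the sketch needs a ledger, which is the point of the question above.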

The questions on SSI I need to have answered

Commercial use cases?

We live in a world that is built upon certain commercial structures. These structures are pretty much universally driven by money. I want to understand how we can fit an identity framework, that is based on presenting verifiable claims, to a service. Who will pay for the verification? If one organization pays, will they be happy if that data is then shared with a competitor to build up a trusted relationship with them?

Are we back to the same issues we had with federated identity? As Phillip Windley said back in 2006: “Not surprisingly, the hard part isn’t usually the technology. Rather, the hard part is governing the processes and business relationships to ensure that the federation is reliable, secure, and affords appropriate privacy protections.”

Will Self Sovereign Systems run into similar commercial issues around business relationships, but this time on a pay-for-use basis?

An interesting look at how this could be solved is from the Web of Trust working group and their work-in-progress treatise “How SSI Will Survive Capitalism”. Something I will be keeping a close eye on. This is my main concern from their SWOT analysis: “Lack of upfront financing due to lack of platform (chicken & egg problem)”.

And a last point before I move on. A government official in the UK raised the question of data ownership: is a government-verified identity document, like a passport, actually your data to own?

This governance thing?

I’m also not sure about the whole idea of SSI being a magical panacea for refugees. There is a nagging feeling in the back of my head around the ‘stewards’ model. Self Sovereign frameworks like Sovrin use a stewards model to maintain trust. The stewards are trusted third parties: organizations that operate the nodes in the distributed ledger. Sovrin currently has over 50 stewards that provide human and computing power.

I can see the positive aspect of this. It extends the notion of decentralization to another layer. Good. I do, however, wonder if the steward will become a weak point in the system. Will cybercriminals target stewards to gain control of the nodes?

Privacy, really?

The privacy aspects of decentralized SSI are part of the charm of the system. Sovrin, for example, uses zero-knowledge proofs as the underlying mechanism for minimal disclosure of data. ‘Are you over 18?’ Only yes/no is revealed. Of course, SSI isn’t the only system that offers privacy of attributes. There are several ways of achieving the same thing using traditional identity services. One such mechanism was developed by Sid Sidner back in 2006, and named “Variable Claims”. I’ve seen it applied in a traditional identity service – it works in a similar manner by only revealing certain data, i.e., yes/no or a partial reveal of attributes.
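As a rough illustration of minimal disclosure, the sketch below answers the ‘over 18’ question without handing over the date of birth. It is deliberately not a zero-knowledge proof and not Sid Sidner’s “Variable Claims” mechanism. It simply assumes that a trusted party holding the birth date computes the answer, and the function names and response shape are hypothetical.

```python
from datetime import date


def age_on(birth_date, today):
    """Return the age in whole years on the given day."""
    years = today.year - birth_date.year
    # Subtract a year if this year's birthday has not happened yet.
    if (today.month, today.day) < (birth_date.month, birth_date.day):
        years -= 1
    return years


def answer_over_18(birth_date, today):
    """Reveal only a yes/no answer; the birth date never leaves this function."""
    return {"question": "over_18", "answer": age_on(birth_date, today) >= 18}
```

A relying party would receive only the `question` and `answer` fields. In a real deployment the answer would also need to be signed or cryptographically proven so the relying party can trust it, which this sketch leaves out.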

The problem is this. It is all well and good having minimal disclosure. But what if you want to buy a pair of shoes online? You have to allow the online vendor to know your address to send the shoes to. They will likely also want your name and other demographic data, if they can get consent, for marketing purposes. Your data is then outside the SSI and held in a more traditional manner. And…it is now outside of your control too.

“Options make for a healthy ecosystem” – Tim Bouma

I remember looking at Pretty Good Privacy (PGP) way back. It offered the hope of secure email communications based on the idea of a “web of trust”. PGP always seemed very ‘techie’ to me; you virtually needed a PhD in computer science to use it. Usability, rather than methodology, has probably killed PGP – even Phil Zimmermann, who invented PGP, doesn’t use it anymore. I get the same ‘techie’ feel of PGP within the SSI movement. I know that folks in SSI are working hard to get neat apps together to help with usability, but still, there is an air of PGP about it. I can’t shake it, though I want to. I think it comes down to this.

We need to understand the true nature of why we use digital identity, the real use cases, the pitfalls of such use cases, as much as we need the technology to make them happen.

I do not, however, want to write a technology off, just because I have a few unanswered questions. I can see, for example, that blockchain has some use cases that fit well and as an additional layer in a tech stack it has enormous potential.

Tim Bouma, Senior Policy Analyst for Identity Management at Treasury Board Secretariat of the Government of Canada, recently summed up the SSI debate perfectly, and I agree wholeheartedly with his very pragmatic take. Tim explores technology with open eyes and the hard head of experience. He said in a recent tweet and Medium post on SSI:

“The extreme (decentralized) case is no service provider, but likely it will be a mix of centralized, federated and decentralized options. That’s ok because options make for a healthy ecosystem.”

SSI is on the extreme end of the digital identity spectrum. Its focus is putting control back in the hands of you, the user. But SSI is not the only way to skin a cat. My own view is that a mix of technologies will, at least for the foreseeable future, be needed to accommodate the vast array of needs across the identity ecosystem. I can see use cases for SSI. But will it become the overarching way that humans resolve themselves in a digital realm? I don’t know, I don’t have a crystal ball, but my gut says not, unless there are compelling answers to the three questions I have listed above. Maybe the SSI community can help me to understand?

Author

Susan Morrow

Having worked in cybersecurity, identity, and data privacy for around 35 years, Susan has seen technology come and go; but one thing is constant – human behaviour. She works to bring technology and humans together.

Find her @avocoidentity

-

Francesca Hobson posted an article

Can the re-use of identity data be a silver bullet for industry?

Can a “make do and mend” ethos work to make digital identity universal?

The number of conferences that focus on digital identity has increased several-fold since I first became involved in the space. Yet at a recent conference, a colleague heard someone say “…here we are, 20 years on, and we are still no further forward in creating a digital identity usable by all”.

The elusive nature of the identity ‘silver-bullet’ continues to haunt the industry. Identity specialists the world over are talking at conferences, in meetings, on social media, trying to find a solution. They are pulling together ideas and thoughts on how to make identity accessible for all and usable across a complicated ecosystem of stakeholders.

But the problem continues: why is digital identity still a hornet’s nest of interoperability issues and disparate systems?

Identity landscape – what’s going on

The current identity landscape can be described as ‘fluid’. There are many approaches across many different use cases; it really is a mixed bag of solutions. If an organization puts out a tender for an identity solution, they had best make sure that their requirements list closely reflects what they want, as they will get a rainbow of options in response.

In a very general way, you can break down the identity landscape like this:

Citizen Identity: There are a lot of governments either already playing in the citizen ID space or preparing to. In the UK, for example, the Verify scheme is now about six years old and has over 4 million users who use it with about 19 government services. But there it stays; it has yet to find any commercial re-use.

ID Mobile Apps – apps like Yoti offer a mobile device-based identity that can be used with participants in their ecosystem. Yoti had over 3.7 million users as of May 2019 and hundreds of relying parties consuming the Yoti ID. There are quite a few ID apps appearing, including Verified.me from SecureKey. Another worth mentioning, although it is in its early stages, is a collaboration between Mastercard and Samsung to deliver a “…better way for people to conveniently and securely verify their digital identity on the mobile devices”. But again, apps have specific use cases and tend to stay in a confined ecosystem, although they have great potential for re-use.

Social and federated accounts – Facebook, Google, Amazon, and similar are not really thought of as ‘identities’, but often contain some or all of the data needed when creating a digital identity elsewhere. These accounts have massive potential for re-use across a wider ecosystem.

CIAM platforms – there are a number of players in this area, companies like Okta, Ping, Janrain, and ForgeRock. They offer platforms that cover a remit of customer marketing and analytics alongside more traditional IAM requirements. They are usually based on standard protocols so could work in a wider ecosystem.

Identity services and APIs – this can cover a lot of ground, but one of the more promising areas being offered is in the connectivity of all of the players in an identity landscape. Companies like Avoco Secure and SecureKey offer technology that can link ecosystem components together to build the interoperability layer.

Self-Sovereign Identity (SSI) – coming up on the inside is SSI. This decentralized approach to identity is all about putting identity back in the hands of the user. However, questions around the commercial use of SSI are still left unanswered.

How can we solve a problem like identity?

As you can see, the identity landscape is complex and there are a lot of moving parts. The main hurdle to creating a Shangri-La for the identity space is the very disparate, disconnected, non-interoperable playground that we see today.

We have created a situation where a digital identity, which is a reflection of an individual, is being split into thousands of fractions; each disconnected, often siloed and placed into closed systems.

The result is thousands of repeated data snippets. This is one of the reasons why personal data theft is so easy and so rife.

This was recently summed up by Alastair Campbell of HSBC bank at an OIX event in London, where he said:

“Creating a vibrant marketplace together rather than a ‘winner-takes-all’ – that’s what we should all be interested in”

We have to move from this fractured place to a culture of re-use.

The old “make do and mend” ethos needs to find its digital counterpart in the world of digital identity. Here are some ideas on making this work:

Federation and re-use: The identity world is made up of silos of offerings across multiple vendors. But digital identity should not work like this. Digital identity really is an ecosystem. Any identity should be transferable across any relying party that needs it. Creating a ‘closed-shop’ in digital identity is doomed to fail. Ecosystems should be built to allow existing identities and identity data to be drawn in and re-used. Apps like Yoti and digi.me, platforms, including Ping, and citizen ID such as Verify and eIDAS, can be plugged in and offered up to whoever needs the data.

Uplift: The ecosystem needs to be able to accommodate new data that adds weight to the re-used IDs if needed.

Events: Often it isn’t about who you are but what it is you’re trying to do. Identity allows us to do jobs online and these can be event-driven.

Frameworks and rules: The legal basis for allowing re-use of existing identity needs to be looked at. This should focus on the interoperability layer. There are bound to be cases where competitors need to block the use of certain identity apps or platforms. This does not negate the general use of reusable identities within a wider ecosystem. But it does allow for micro-ecosystems to be created.

The identity ecosystem should be about creating flexible IDs around achievable business models that offer value to the user and the service consuming the ID. After all, it isn’t very often you want an actual ID. Usually, you just need the answer to a question, e.g., “are you over 18 so you can buy this age-restricted product?”

Finding a Cure for Identity

The reuse of existing identity accounts may well hold the key to solving the issue of a disparate identity world. Allowing all to play will open up this closed system. Government identity initiatives will be able to find a commercial use case and even an ROI. What’s key is collaboration via industry bodies such as OIX and Kantara.

Organizations like Kantara do sterling work on creating standards in the identity space. But this work needs to also be augmented with a holistic view of how to pull identity out of the silos and into the wider world.

A final word from Analyst Martin Kuppinger at the recent European Identity & Cloud Conference 2019 sums the situation up:

“Aim to connect to identities – not manage them yourself, orchestrate services and don’t invent what already exists, segregate data from applications so that it can be used and is not locked”.

Originally posted on www.csoonline.com

Author

Susan Morrow

Having worked in cybersecurity, identity, and data privacy for around 35 years, Susan has seen technology come and go; but one thing is constant – human behaviour. She works to bring technology and humans together.

Find her @avocoidentity

-

Francesca Hobson posted an article

Should We Worry About the IoT Being Used as a Weapon of Mass Control?